Disclaimer: Human-centred AI is a huge field that combines ethics, sustainable development, technology, some pretty advanced computer science, and more. We’ll be talking about the design side of things. AI was used to conduct research for this piece and find sources and facts. All words are my own.

I’ve seen some terrible implementations of AI in firms. I mean truly, truly awful. Imagine using a proprietary AI—a simple GPT wrapper with custom instructions—but for some reason the business decided to build its own front end for it. Now imagine that same firm blocking access to the usual AI tools like Gemini, OpenAI, Claude, Perplexity, and everything in between. Now you’re stuck with an inferior model (Gemini 2.5 Flash, for example) that lives in a terrible proprietary interface.

You can access the chat history but you can’t search it. There is a saved prompt library to save time but you can’t make your own to suit your needs. There is also something called “agents”, which are like Gemini Gems or OpenAI custom GPTs, but again you can’t make your own. The proprietary AI constantly cuts off long responses and you have to re-run the prompt by specifying “continue from bullet point #3,” which costs tokens and your own valuable time.

Frustrating, isn’t it? Shouldn’t happen in 2025, right? Well, you’d be surprised how often this happens.

We could dig into the phenomenon. Why do companies insist on building shitty AIs wrapped in shitty UI? Fears about security, reputational risks, business objectives, all that isn’t that important for this article. It just happens and it sucks. Let’s try and fix it.

I can’t believe I’m saying this in 2025, but the secret sauce is involving your users at every step. Welcome back to 1982. Blade Runner is in the cinemas, anthropomorphising humanity’s fear of advanced AI. IBM, already a computing giant, is democratising technology by putting a computer on every desk. Michael Cooley writes a short book called “Architect or Bee? (The Human Technology Relationship)” in which he coins the term “Human-centred Design” for the first time.

The idea was simple: the technology should be designed to enhance human capabilities, creativity, and problem solving, rather than simply replacing human effort or reducing complex tasks to simple, repetitive actions. The idea endured for 43 years and is now central to the emerging field of Human-Centred AI.

Our personal boulder of Sisyphus

So why then do we keep banging our heads against a constant lack of investment, lack of resources, lack of buy-in? I think of Design and I think back to the countless LinkedIn posts and Reddit threads bemoaning the challenges we face in getting buy-in. Design is routinely deprioritised, understaffed, under-resourced, and underappreciated. Designers go about their lives championing, advocating, getting alignment, getting buy-in and getting shut out of big strategy meetings.

The reason is as cynical as it is simple. The lack of buy-in is due to the lack of tangible benefit. Human-Centred Design is still seen as a naive, idealistic philosophy, rather than a valuable part of the process. McKinsey can write a thousand reports on the business value of design and still no one will listen.

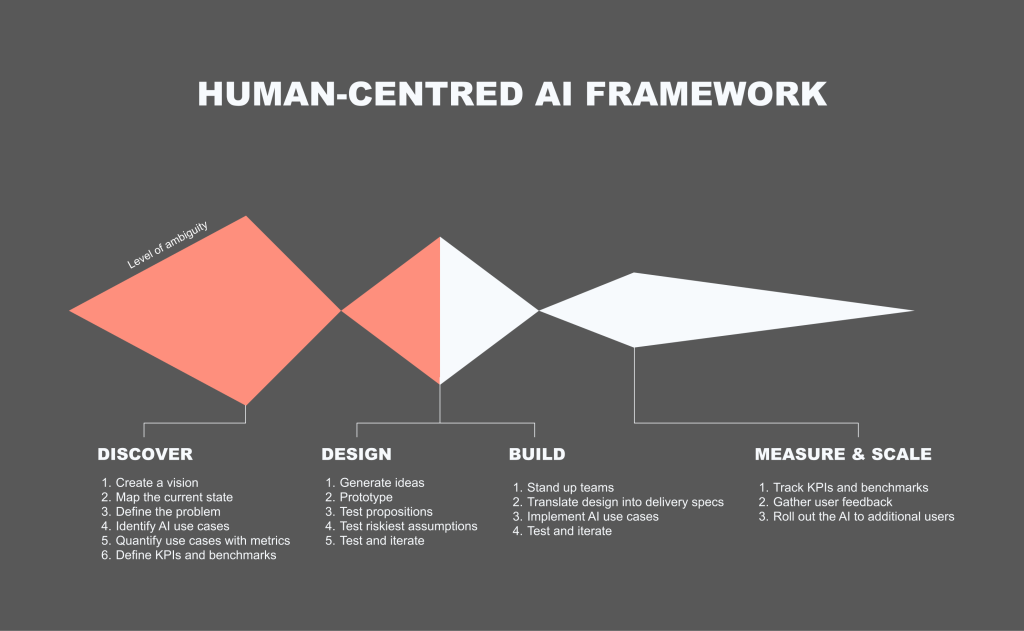

Framework for Human-Centred AI

I’m not re-inventing the wheel here. You might’ve seen variations of this framework everywhere. Double-diamond, triple-diamond, Alpha-Beta-Live, Imagine-Deliver-Run… the list goes on. The point is that I’m proposing specific methods and activities within each “diamond” that are unique to designing AI products. Read on.

Discover

1. Solve an actual problem

Do. The. Research. Talk to your users. See what their problems actually are. Not doing so is akin to a general sending in his army without reconnaissance. Companies just slap AI on anything these days. That inevitably fails unless you solve an actual problem worth solving with AI.

2. Pick ambitious use cases

AI can do a lot of useful stuff. It can summarise, pull documents, generate content. From talking to your users you’ll have a good idea of what they need. Prioritise the use cases and pick an ambitious one. No point in solving small problems, right?

3. Define KPIs and benchmarks

I like a good old-fashioned planning workshop. There is a lot of data out there. Just sit down and do it, however long it takes. A day, two days, a week. It doesn’t matter. If NASA engineers can meticulously map out 10,000 single points of failure and plan contingencies for each one, you can map out some KPIs and benchmarks. This is key. The more specific and granular, the better. For example, “we want to improve efficiency of annual reports” is terrible. “We want to reduce the time it takes to produce an annual report from 40 hours to 4 hours, 95% accurate pre- and post-human audit” is much better.
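To make “specific and granular” concrete, here is a minimal sketch of how a KPI from that workshop could be written down so progress can be checked later. The `Kpi` class and its field names are my own hypothetical illustration, not part of any framework; the 40-hour and 4-hour figures come from the annual report example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Kpi:
    """One measurable target: where we are today and where we want to be."""
    name: str
    baseline: float  # value measured before the AI tool exists
    target: float    # value we commit to reaching
    unit: str

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far (1.0 = target hit).

        Works for lower-is-better and higher-is-better KPIs alike,
        because the sign of the gap cancels out in the division.
        """
        gap = self.target - self.baseline
        if gap == 0:
            raise ValueError("target must differ from baseline")
        return (current - self.baseline) / gap

    def is_met(self, current: float) -> bool:
        """True once the current value has reached or passed the target."""
        return self.progress(current) >= 1.0


# Hypothetical usage with the annual report figures.
report_time = Kpi("Time to produce annual report", baseline=40, target=4, unit="hours")
print(report_time.progress(22))  # 0.5, i.e. halfway from 40 hours to 4 hours
print(report_time.is_met(4))     # True
```

Writing the baseline next to the target is the point: a KPI without a measured starting value can’t show progress, only a pass or fail at the end.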

Design

1. Design with users and iterate often

This is also not new. Brainstorm, test, iterate. We’ve been doing this for 40+ years. I usually start with rough concepts, progress to low-fidelity prototypes (little more than interactive PowerPoints), and only then, as the ambiguity reduces with each iteration, move on to fully clickable or functional prototypes.

2. Measure KPIs early

Measure whatever you can measure with prototypes. There are tools to tell you how successful a usability test was: System Usability Scale, my own AI Experience Score (AES), UX-Lite, and many more. The key message is to measure qualitatively to begin with. Forget automated AI benchmarks; we’re not building new models here.
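As an illustration of how little tooling this needs, here is a small sketch of scoring one participant’s System Usability Scale questionnaire. It follows the standard SUS scoring rule (ten items answered 1 to 5, odd items contribute the answer minus 1, even items contribute 5 minus the answer, and the sum is multiplied by 2.5). The function name is my own, and AES and UX-Lite are scored differently, so they aren’t covered here.

```python
def sus_score(responses: list[int]) -> float:
    """Return the 0-100 SUS score for one participant's ten answers."""
    if len(responses) != 10:
        raise ValueError("SUS needs exactly 10 responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")

    total = 0
    for item_number, answer in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += answer - 1   # odd items are positively worded
        else:
            total += 5 - answer   # even items are negatively worded
    return total * 2.5


# One hypothetical participant after a prototype test.
print(sus_score([4, 2, 4, 2, 4, 2, 4, 2, 4, 2]))  # 75.0
```

With five or so participants per round, averaging these scores across iterations gives a rough trend line long before any analytics exist.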

Build

1. Start small

Build quick spikes, functional prototypes, show them to users, get feedback. Start small and scale up. 5 users, 10 users, 100, 1000… and so on. Here’s a cautionary tale I’ve seen across multiple large organisations: teams work in isolation on some proprietary AI tool, they release their creation to the wider company, the AI tool performs poorly, and consultants are brought in to figure out why and create an adoption strategy that’s already built on shaky foundations. This expensive mistake could have been avoided completely with a robust ‘test and iterate’ cycle or a closed Beta to gather feedback.

2. Continue to measure KPIs

Continue measuring. Perhaps now we can use AES quantitatively, include analytics. The more data you gather the better. To expand on the pattern I described above: an AI tool is released, adoption and engagement are poor, no one knows why. Consultants are brought in but no usage data or user feedback is available. Consultants are forced to run expensive research to understand why.

Scale

1. Bake in feedback mechanisms

Analytics feedback is great but it won’t tell you why people are doing what they are doing, or what exactly they are frustrated about. We had a discussion about this with a client just a few weeks ago. They saw high drop-off at a certain part of their journey. It was a form field on a certain page during onboarding. The drop-off spiked there and no one knew why. During interviews it turned out that that particular question had nothing to do with it. The culprit was the form field below, which was unusable and inaccessible. Analytics data will only tell you so much. User research is the thing that will help you pinpoint the problem.

2. Measure ROI

This is where we get to the meat of the matter. Hopefully you’ve been collecting data and measuring progress this entire time. Now it’s time for that presentation back to the board. Let’s talk more about that in the next section.

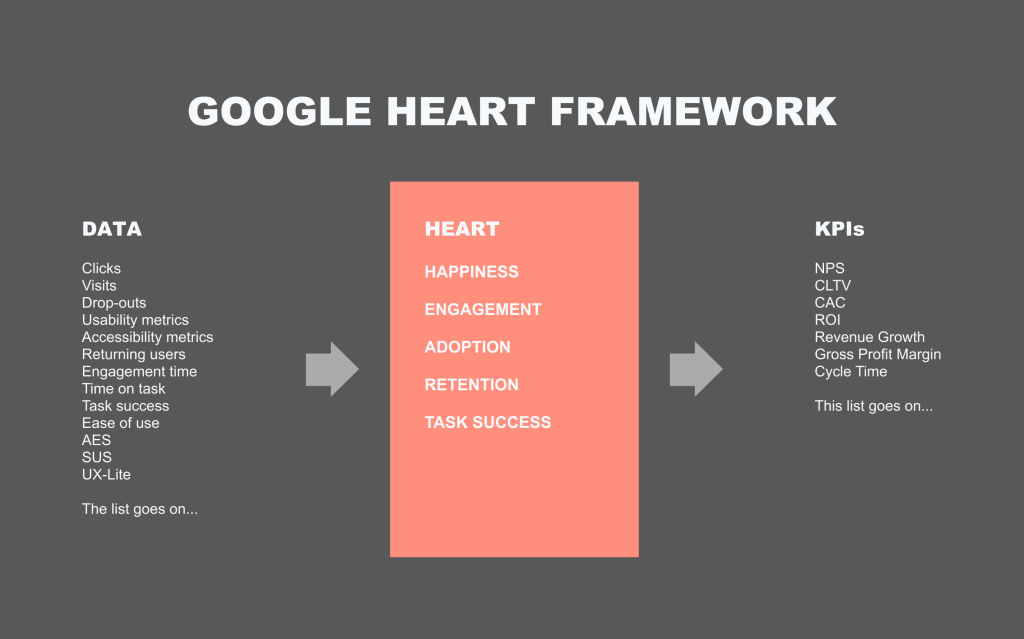

Measuring ROI

I love the Google HEART framework. It ties subjective terms like “happiness” with objective measures, like “satisfaction scores.” It also allows you to track the impact of design on the bottom line. You can read more about the Google HEART framework here.

I’ll give you an example. We were building a new product. I called a workshop. In it we had representatives from design, product, technology, analytics, and data science. We went through the exercises and design ended up being responsible for “Happiness.” We measured it by taking our overall satisfaction score, which we measured with usability studies and contextual surveys on site. I aggregated a whole bunch of various usability metrics into an overall Happiness score, which was displayed on the Google Analytics dashboards for the whole business to see. The data science team then used this aggregated score to measure its impact on a) downstream KPIs (e.g., CLTV) and b) the gross revenue. For the first time we could improve something on the site and see that, for example, it resulted in a 2% uplift in revenue. This was huge. The principle is the same for AI. Plan the metrics in advance and find a way to tie them to the bottom line. Suddenly all ROI calculations become a lot easier.
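For a feel of what such an aggregation could look like, here is a rough sketch, not the method we actually used. It assumes each usability metric is rescaled to a 0 to 1 range and combined as a weighted average. The metric names, ranges, weights, and revenue figures are all hypothetical, and the uplift function is a plain before-and-after comparison rather than the kind of attribution a data science team would run.

```python
def normalise(value: float, lowest: float, highest: float) -> float:
    """Rescale a raw metric onto 0..1 given its possible range."""
    return (value - lowest) / (highest - lowest)


def happiness_score(
    metrics: dict[str, tuple[float, float, float]],
    weights: dict[str, float],
) -> float:
    """Weighted average of normalised metrics.

    `metrics` maps a metric name to (value, lowest, highest).
    `weights` maps the same names to their relative importance.
    """
    total_weight = sum(weights[name] for name in metrics)
    weighted_sum = sum(
        weights[name] * normalise(*metrics[name]) for name in metrics
    )
    return weighted_sum / total_weight


def relative_uplift(before: float, after: float) -> float:
    """Change relative to the starting value, e.g. 0.02 means +2%."""
    return (after - before) / before


# Hypothetical inputs: a SUS score out of 100 and a 1-5 satisfaction survey.
metrics = {"sus": (75, 0, 100), "csat": (4, 1, 5)}
weights = {"sus": 1.0, "csat": 1.0}
print(happiness_score(metrics, weights))    # 0.75
print(relative_uplift(100_000, 102_000))    # 0.02
```

The design choice that matters is agreeing on the weights and ranges in the workshop, before launch, so nobody can tune the score afterwards to flatter the result.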

Conclusion

If you want to succeed in this AI race we’re seeing, then a Human-Centred approach is what’s going to get you there. Start with a vision, speak to actual users, map out the whole process, test and iterate often, plan KPIs in advance and measure them relentlessly throughout. Let’s avoid a future where companies pour money into crappy AI that benefits no one.